Should you use Zoom or not?

There has been a lot of controversy and confusion about the security of online Zoom meetings. Some security experts are telling people not to use Zoom, while other tech experts say they don't know what all the fuss is about and that Zoom is fine to use. In this post I hope to summarize and clarify a few details about these general recommendations so that you can make a better-informed decision. The information presented here comes from my own experience and from the online references included in this post.

TL;DR: I will join Zoom meetings, but I won't install their software. In my opinion, Zoom as a company exhibits a pattern of behavior around security that makes me distrust the software it produces. This may change in the future, but they'll have to earn my trust back. In the meantime, there are many alternatives for online meetings from companies that have a history of handling security better than Zoom has.

If you want further reading on this topic, I strongly recommend Bruce Schneier's post "Security and Privacy Implications of Zoom." I have only touched on a few of the points from his post.

Who has banned Zoom?

A number of organizations have banned Zoom. Some of the companies include Tesla, SpaceX, Daimler AG, Bank of America, Google (telling employees to use Google Duo instead), Ericsson AB, Smart Communications, and NXP Semiconductors.

Schools have also restricted Zoom. New York City's Department of Education and Clark County Public Schools in Nevada have banned its use, and Singapore has barred its teachers from using it.

Then there are the government agencies that have bans in place. This list includes NASA, government agencies in Taiwan, the German foreign ministry, the Australian Defence Force, and the U.S. Department of Defense. The U.S. Senate sergeant at arms has told lawmakers' offices to avoid using Zoom. Even China started blocking Zoom in September 2019.

History of (in)security at Zoom

Why so much fuss over it? Let’s take a look at Zoom’s record.

A security hole that survived uninstalling

July 8, 2019 – Zoom allowed a malicious website to turn on your camera without your permission.

This is when I decided I didn't want to install Zoom software on any of my devices. Zoom's Mac client installed a hidden local web server that stayed behind even after you uninstalled Zoom, so your system remained vulnerable. Apple stepped in and silently sent out a patch to fix Zoom's problem.

March 20, 2020 – Zoombombing: uninvited people joined and disrupted meetings.

March 31, 2020 – Zoom didn't use end-to-end encryption, even though it claimed to.

April 1, 2020 – Zoom could be used to steal Windows login credentials.

April 2, 2020 – Zoom secretly displayed data from people's LinkedIn profiles.

April 3, 2020 – It appeared that no one at the company had an adequate grasp of cryptography.

Zoom behaves like malware

Example #1: Incomplete uninstall process – Have you ever tried to uninstall malware? It never seems to go away, or it cripples your system in the process of being removed. (See July 8, 2019, above for details.)

Example #2: Zoom makes it difficult to join a meeting without installing their software. Try joining a Zoom meeting in your browser. They do everything they can to get you to download and install Zoom before letting you join the meeting. Here is what you have to go through.

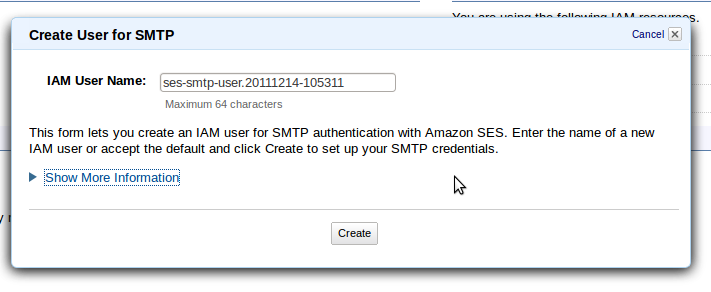

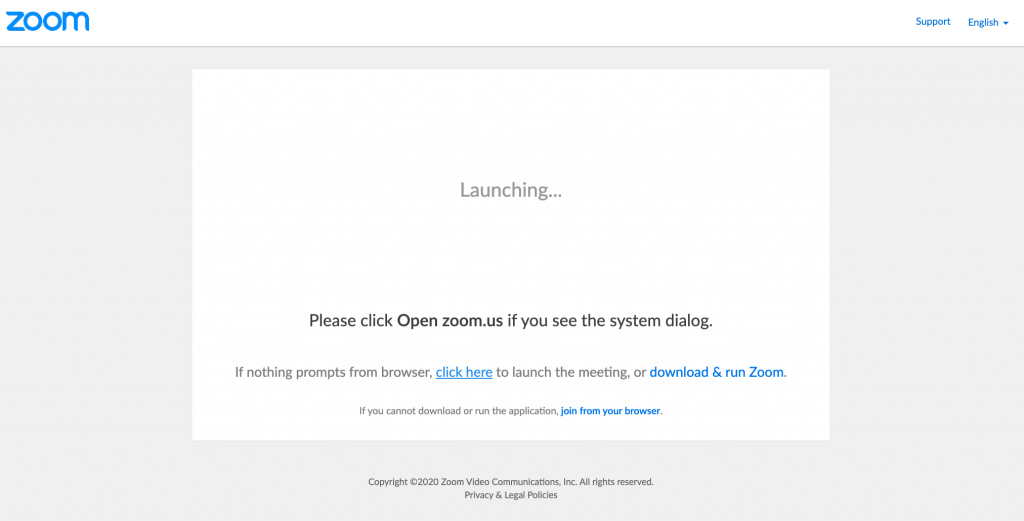

First you click on your meeting link and this comes up. Hey look, the only option is to "download & run Zoom."

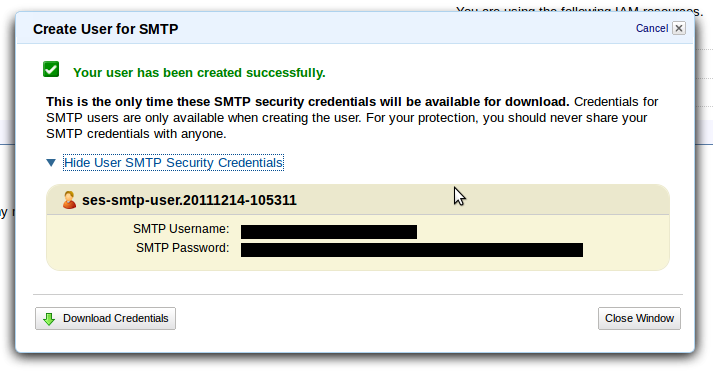

After waiting a few seconds, you get another prompt to "download & run Zoom" or "launch the meeting" (which means download & run Zoom).

Then, after finally clicking "click here" and waiting a few more seconds, you get a small link to "join from your browser." You'd think they don't want you to do that!

Recommendations for using Zoom, if you must

- Join Zoom meetings through the browser. Only install the Zoom software if necessary.

- If you are hosting a meeting, set a password and distribute it to those who will be attending. Don't share meeting passwords in public spaces, such as social media.

- Use a waiting room when possible to control who enters your meeting.

In Summary

Should you stop using Zoom completely? Unless you're discussing state secrets or extremely sensitive matters in your meetings, I don't think that is necessary, as long as you take the precautions listed above.

I can hear some people saying, "All software has security holes; Zoom isn't that bad." It is true that all software has security holes. But if I have an alternative from a company with a proven security record, a development process that includes security, and a habit of quickly patching holes when they're found, I'll choose that.

Here are a few alternatives to Zoom:

- Google Meet (formerly Hangouts)

- Microsoft Teams

- Slack

- Cisco Webex

- GoToMeeting

- Skype

- FaceTime

- Signal

- Discord

- Jitsi

References:

- https://www.techrepublic.com/article/who-has-banned-zoom-google-nasa-and-more/

- https://fortune.com/2020/04/23/zoom-backlash-daimler-bank-of-america-bans-curbs-security-concerns/

- https://www.cnet.com/news/zoom-security-issues-zoombombings-continue-include-racist-language-and-child-abuse/

- https://www.theguardian.com/world/2020/apr/11/singapore-bans-teachers-using-zoom-after-hackers-post-obscene-images-on-screens

- https://9to5mac.com/2020/04/10/another-zoom-ban/

- https://www.zdnet.com/article/us-senate-german-government-tell-staff-not-to-use-zoom/

(June 15, 2020) Edit to add: On the surface, Zoom has been taking some actions that might improve its security, but it is still not doing all it can. More info here.

(December 30, 2020) Edit to add: News stories are still coming out about Zoom sharing user data: https://mb.ntd.com/zoom-shared-us-user-data-with-beijing_544087.html